How AI Predicts Quality Issues in SPC

- March 26, 2026

You’re not in the 1950s anymore, so why are you still relying on outdated quality control methods? Checking charts every 30 minutes and sampling 1 in 50 parts isn’t just slow - it’s expensive. Missed defects, endless scrap, and weeks wasted on root cause analysis are draining your margins.

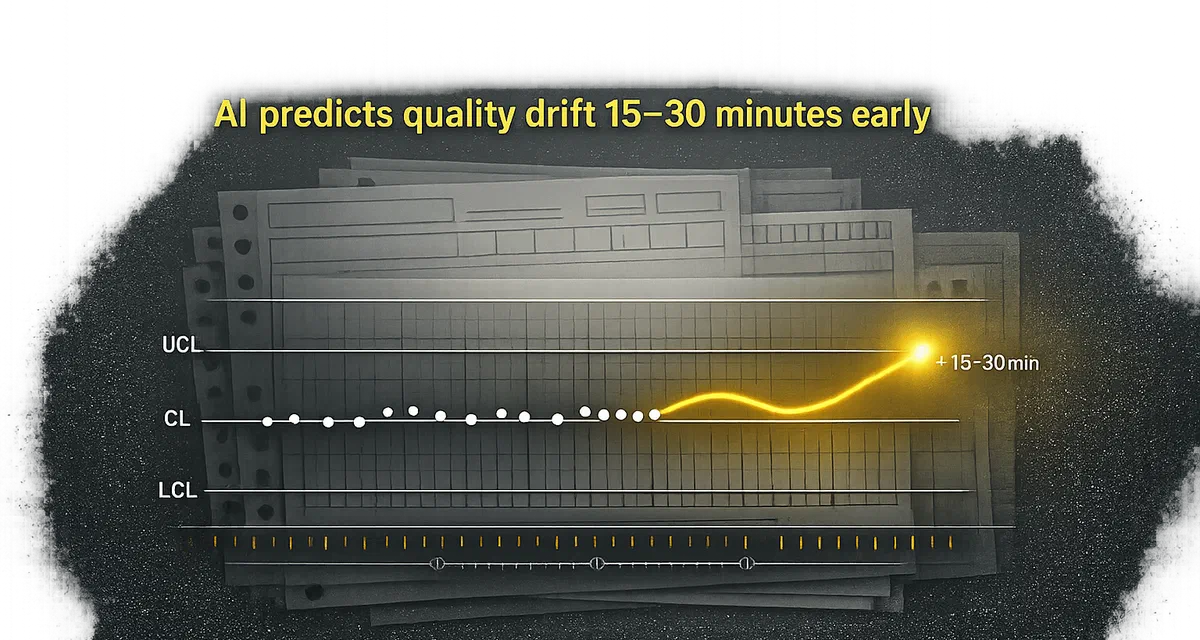

Here’s the fix: AI doesn’t wait for problems to show up - it predicts them. By analysing every data point in real time, AI flags issues 15–30 minutes before your process drifts out of control. No guesswork. No missed trends. Just smarter decisions, faster.

The Old Way vs. The Smart Way

| The Old Way | The Smart Way with AI |
|---|---|
| Manual checks every 30 minutes | Continuous real-time monitoring |
| Sampling 1 in 50 parts | 100% inspection of every unit |
| Reactive problem-solving | Predictive alerts before defects occur |
| Weeks for root cause analysis | Pinpoints issues in hours |

Let’s face it: the old way is costing you money. AI-driven SPC can cut false alarms by 40%, halve defect rates, and, for a £50 million manufacturer, save up to £1 million a year on quality costs.

Now, let’s dig into how this works - and how to get started.

How AI Predicts Quality Problems in SPC

AI Techniques That Improve SPC

AI is transforming Statistical Process Control (SPC) by analysing multiple variables at once, something traditional methods like Shewhart charts struggle with. These older tools focus on one variable at a time, overlooking how factors like temperature, pressure, and material grade interact. AI, however, uses multivariate analysis techniques - such as Gradient Boosting Decision Trees and Random Forests - to uncover the “golden-run” parameter combinations that keep production on track [4].

Advanced methods like time series forecasting (using LSTM networks and ARIMA models) predict future data points, while anomaly detection with autoencoders flags subtle data shifts that standard SPC might miss. Additionally, pattern recognition with Convolutional Neural Networks interprets complex control chart patterns, such as cyclic trends or mixtures, automatically [6]. These innovations have tangible benefits: AI-driven SPC systems can reduce false alarms by over 40% and cut the mean time to detect issues by 30% to 85%, depending on the process [5][6]. Together, these tools enable manufacturers to use historical data to define operational norms and predict deviations before they lead to problems.

How Historical SPC Data Trains AI Models

AI learns what “good” production looks like by analysing historical data from successful runs. The quality of this data is critical, as Jason Chester from Advantive emphasises:

“Artificial Intelligence is only as good as the data it learns from” [4].

If your SPC data is messy - filled with mislabelled entries, missing timestamps, or inconsistent part numbers - the AI won’t filter out the noise; it will amplify it. By feeding the system 3–6 months of clean historical data, such as temperature logs, pressure readings, material properties, and tool wear metrics, the AI can establish a baseline of normal production behaviour [2]. It identifies variable combinations that consistently lead to zero defects, creating a predictive envelope to flag deviations before they escalate.
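The envelope idea can be sketched in a few lines. This is a deliberately simplified illustration, not how any particular product works: it records the range each parameter took across defect-free runs and flags readings outside it. The function names, parameter names, and sample values are all invented for the example, and a real model would also capture interactions between variables, which per-parameter ranges ignore.

```python
from typing import Dict, List, Tuple

Run = Dict[str, float]
Envelope = Dict[str, Tuple[float, float]]


def build_envelope(good_runs: List[Run]) -> Envelope:
    """Return the (min, max) seen for each parameter across defect-free runs."""
    envelope: Envelope = {}
    for run in good_runs:
        for name, value in run.items():
            low, high = envelope.get(name, (value, value))
            envelope[name] = (min(low, value), max(high, value))
    return envelope


def deviations(reading: Run, envelope: Envelope) -> List[str]:
    """List the parameters in a new reading that fall outside the envelope."""
    flagged = []
    for name, value in reading.items():
        if name in envelope:
            low, high = envelope[name]
            if value < low or value > high:
                flagged.append(name)
    return flagged


# Hypothetical historical runs that produced zero defects.
good_runs = [
    {"temperature_c": 181.0, "pressure_bar": 5.1},
    {"temperature_c": 183.5, "pressure_bar": 5.0},
    {"temperature_c": 182.2, "pressure_bar": 5.3},
]
envelope = build_envelope(good_runs)

# Temperature is above anything seen in a good run; pressure is within range.
flagged = deviations({"temperature_c": 186.0, "pressure_bar": 5.2}, envelope)
print(flagged)  # ['temperature_c']
```

The quality of `good_runs` decides everything here: a mislabelled run widens the envelope and hides real deviations, which is the practical meaning of the warning about messy data.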

Companies that prioritised disciplined data collection before implementing AI saw a 45% reduction in the time spent cleaning up data [4]. Once the system is operational, it retrains itself automatically when enough new data is collected, adapting to changing conditions without requiring manual adjustments [7].

Real-Time Monitoring: Turning Data into Alerts

After training, AI moves into real-time monitoring, analysing incoming data from sensors, gauges, and IoT devices. Unlike traditional SPC, which relies on manual checks every 30 minutes, AI evaluates every data point continuously, applying SPC rules to both current and predicted data [7]. If the model forecasts that upcoming measurements will breach control limits, it sends alerts through APIs, email, SMS, or enterprise messaging systems - well before defects occur [7].
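To make "checking predicted data against control limits" concrete, here is a minimal sketch. It swaps in a plain least-squares trend line as a stand-in for the LSTM or ARIMA forecasters discussed earlier, so it only illustrates the forecast-then-check pattern. The function names, control limits, and readings are assumptions made for the example.

```python
from typing import List, Optional


def linear_forecast(values: List[float], steps_ahead: int) -> List[float]:
    """Fit a least-squares line to the values and extend it steps_ahead points."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two points to fit a trend")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    sxy = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    sxx = sum((i - x_mean) ** 2 for i in range(n))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return [intercept + slope * (n - 1 + k) for k in range(1, steps_ahead + 1)]


def first_predicted_breach(
    values: List[float], lcl: float, ucl: float, steps_ahead: int
) -> Optional[int]:
    """Return how many steps ahead the forecast first leaves [lcl, ucl], or None."""
    forecast = linear_forecast(values, steps_ahead)
    for k, predicted in enumerate(forecast, start=1):
        if predicted < lcl or predicted > ucl:
            return k
    return None


# Hypothetical readings drifting upwards, all still inside the limits.
readings = [10.0, 10.5, 11.0, 11.5, 12.0]
breach = first_predicted_breach(readings, lcl=8.0, ucl=13.2, steps_ahead=5)
if breach is not None:
    print(f"Alert: forecast leaves control limits in {breach} samples")
```

Every measured point above is in control, yet the trend says the limit will be crossed three samples from now. That gap between "in control now" and "out of control soon" is where the early warning comes from.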

A dual-layer approach that combines multivariate statistical tools like Hotelling’s T² with machine learning classifiers identifies subtle anomalies that single-variable charts might overlook [5][6]. For example, an automotive manufacturer using an AI-powered “SPC 4.0” system improved mean detection times by 85% and reduced manual inspection workloads by 60% [5].
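Hotelling’s T² measures how far a reading sits from the historical mean once the correlation between variables is taken into account: T² = (x − x̄)ᵀ S⁻¹ (x − x̄), where S is the sample covariance matrix. The sketch below works this out for just two variables using the closed-form inverse of a 2×2 matrix. The data and names are invented, and setting an actual control limit for T² (normally derived from an F distribution) is left out.

```python
from statistics import mean
from typing import List, Tuple


def covariance(a: List[float], b: List[float]) -> float:
    """Sample covariance of two equal-length series."""
    a_mean, b_mean = mean(a), mean(b)
    return sum((x - a_mean) * (y - b_mean) for x, y in zip(a, b)) / (len(a) - 1)


def hotelling_t2(point: Tuple[float, float], xs: List[float], ys: List[float]) -> float:
    """T-squared distance of a two-variable point from the historical data."""
    s11 = covariance(xs, xs)
    s22 = covariance(ys, ys)
    s12 = covariance(xs, ys)
    det = s11 * s22 - s12 * s12
    if det == 0:
        raise ValueError("covariance matrix is singular")
    d1 = point[0] - mean(xs)
    d2 = point[1] - mean(ys)
    # (d1, d2) times the inverse covariance matrix times (d1, d2) transposed.
    return (d1 * d1 * s22 - 2 * d1 * d2 * s12 + d2 * d2 * s11) / det


# Hypothetical history in which the two variables rise together.
xs = [1.0, 2.0, 3.0, 4.0, 5.0]
ys = [1.1, 1.9, 3.2, 3.8, 5.0]

on_trend = hotelling_t2((4.0, 4.0), xs, ys)
off_trend = hotelling_t2((2.0, 4.0), xs, ys)
print(off_trend > on_trend)  # True
```

Both test points use values that each variable has taken before, so two separate single-variable charts would pass them. Only the second breaks the usual relationship between the variables, and its T² is far larger as a result.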

As Jason Chester summarises:

“SPC and AI are not competing technologies. SPC secures the right data at the right moment; AI converts that data into actionable foresight” [4].

How to Implement AI in SPC

Step 1: Digitise and Organise Your SPC Data

For AI to work effectively, your data needs to be clean and standardised. Start by reviewing your current quality records - inspection logs, manual SPC charts, and spreadsheets - to spot gaps, errors, or formats that AI can’t easily process [2].

Next, upgrade your data collection methods. Install sensors like wireless monitors for temperature, pressure, and vibration to switch from manual sampling to continuous data capture [3]. Use industrial protocols such as MQTT, OPC-UA, or Modbus to connect equipment [1]. If you’re working with older machinery, edge gateways can bridge the gap between legacy PLCs and modern sensor systems [8].

Standardising your data is critical. Ensure each record includes essential details like part numbers, revisions, lot numbers, machine IDs, shift information, and precise timestamps [4]. AI models rely heavily on clean data, and fixing these issues at the source can save significant time - some manufacturers have cut manual data-cleaning efforts by 45% [4]. Additionally, you’ll need a robust historical dataset, ideally covering 6–12 months or more, to train your models effectively [2].
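A simple check at the point of capture is one way to enforce this. The sketch below assumes each record arrives as a dictionary and that timestamps should be ISO 8601; the field names are illustrative and should be mapped to whatever your own system calls them.

```python
from datetime import datetime
from typing import Dict, List, Optional

# Illustrative field names based on the details listed above.
REQUIRED_FIELDS = (
    "part_number",
    "revision",
    "lot_number",
    "machine_id",
    "shift",
    "timestamp",
)


def validate_record(record: Dict[str, Optional[str]]) -> List[str]:
    """Return a list of problems found in one SPC record (empty if clean)."""
    problems = []
    for field in REQUIRED_FIELDS:
        value = record.get(field)
        if value is None or not str(value).strip():
            problems.append(f"missing {field}")

    stamp = record.get("timestamp")
    if stamp is not None and str(stamp).strip():
        try:
            datetime.fromisoformat(str(stamp).strip())
        except ValueError:
            problems.append("timestamp is not ISO 8601")
    return problems


clean = {
    "part_number": "PN-1042",
    "revision": "C",
    "lot_number": "L-2211",
    "machine_id": "PRESS-03",
    "shift": "B",
    "timestamp": "2026-03-26T08:15:00",
}
messy = dict(clean, lot_number="", timestamp="26/03/2026 08:15")

print(validate_record(clean))  # []
print(validate_record(messy))  # ['missing lot_number', 'timestamp is not ISO 8601']
```

Rejecting or quarantining records that fail a check like this at the source is cheaper than cleaning them months later, when the operator who could explain the gap has moved on.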

Once your data is in order, you can move on to building predictive models based on key process parameters.

Step 2: Build and Train Predictive Models

Clean, well-structured data enables AI models to define baseline production parameters, paving the way for proactive quality control. Feed your system a mix of process parameters (e.g., temperature, pressure, speed), material properties (like composition or moisture levels), and environmental factors (such as humidity or vibration) sourced from your historical records [2]. Experiment with algorithms such as ARIMA, LSTM, and Gradient Boosting, selecting the best-performing one for your needs. Regular retraining - whether weekly, monthly, or triggered by new data - helps the models adapt to evolving conditions [7][6].

For example, in an automotive assembly test, Gradient Boosting achieved an 88% accuracy rate for defect prediction (0.82 F1-score), while CNNs excelled in vision-based tasks with 94% accuracy [5]. Use these models to establish a “golden run” envelope, where the AI identifies parameter combinations that consistently yield defect-free production. Any deviation from these parameters can then be flagged before issues arise [4].
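Accuracy and F1-score answer different questions, which is why both are quoted. The sketch below shows how each is computed from a confusion matrix. The counts are invented for illustration and are not taken from the cited test.

```python
from typing import Dict


def classification_metrics(tp: int, fp: int, fn: int, tn: int) -> Dict[str, float]:
    """Accuracy, precision, recall, and F1 for a defect / no-defect classifier."""
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    if precision + recall:
        f1 = 2 * precision * recall / (precision + recall)
    else:
        f1 = 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical results on 1,000 parts, of which 50 are truly defective.
metrics = classification_metrics(tp=40, fp=10, fn=10, tn=940)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

With defects this rare, the model scores 98% accuracy while still missing one defective part in five. F1 is driven by precision and recall on the defect class, so it is usually the more honest number when comparing candidate models.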

With your models trained, the next step is to deploy them for real-time monitoring.

Step 3: Use AI for Real-Time Monitoring

Once trained, deploy your AI models to continuously evaluate incoming data. These systems analyse every data point in real time, eliminating the need for manual checks. They also apply SPC rules to both current and predicted measurements [7]. If the model predicts that upcoming readings will breach control limits, it sends alerts through APIs, emails, SMS, or enterprise messaging systems - often 2–15 minutes before defects occur [3].
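As a rough illustration of applying SPC rules to measured and predicted values together, the sketch below implements two common rules: a point outside the control limits, and a run of eight consecutive points on one side of the centre line. The limits, the readings, and the predicted values are made up, and the forecast is assumed to come from whichever model you have trained.

```python
from typing import List


def beyond_limits(values: List[float], lcl: float, ucl: float) -> List[int]:
    """Indexes of points outside the control limits."""
    return [i for i, v in enumerate(values) if v < lcl or v > ucl]


def run_on_one_side(values: List[float], center: float, run_length: int = 8) -> List[int]:
    """Indexes at which a run of run_length points on one side is reached."""
    hits = []
    streak = 0
    last_side = 0
    for i, value in enumerate(values):
        side = (value > center) - (value < center)  # +1 above, -1 below, 0 on line
        if side != 0 and side == last_side:
            streak += 1
        else:
            streak = 1 if side != 0 else 0
        last_side = side
        if streak >= run_length:
            hits.append(i)
    return hits


CENTER, LCL, UCL = 10.0, 9.55, 10.45  # hypothetical chart parameters

measured = [10.2, 10.1, 10.3, 10.2, 10.1, 10.2]
predicted = [10.3, 10.4, 10.5]  # assumed output of a trained forecaster
combined = measured + predicted

print(beyond_limits(measured, LCL, UCL))      # []
print(run_on_one_side(measured, CENTER))      # []
print(beyond_limits(combined, LCL, UCL))      # [8]
print(run_on_one_side(combined, CENTER))      # [7, 8]
```

On the measured points alone, neither rule fires. Once the predicted points are appended, both do, and the indexes show that the violations sit in the forecast portion, which is what lets the alert go out before the readings themselves go wrong.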

For high-speed production lines, edge hardware can be used to achieve sub-5ms inference times, avoiding delays caused by cloud processing [3]. To enhance accuracy, combine traditional SPC tools, such as Hotelling’s T² charts, with machine learning classifiers. This dual-layer approach reduces false alarms by over 40% and cuts detection times by 30% to 85% [5][6].

Start small by piloting the system on a single high-value or high-scrap production line. Validate the AI’s predictions against actual results before rolling it out across the entire plant [4]. Integrate AI alerts into existing SPC dashboards to ensure a smooth transition - forcing operators to learn a new interface can slow adoption.

For metals manufacturers, implementing AI-driven SPC means digitising existing workflows and connecting legacy equipment to modern monitoring systems, turning manual processes into real-time quality insights.

Old Way vs. New Way: The Impact of AI on SPC

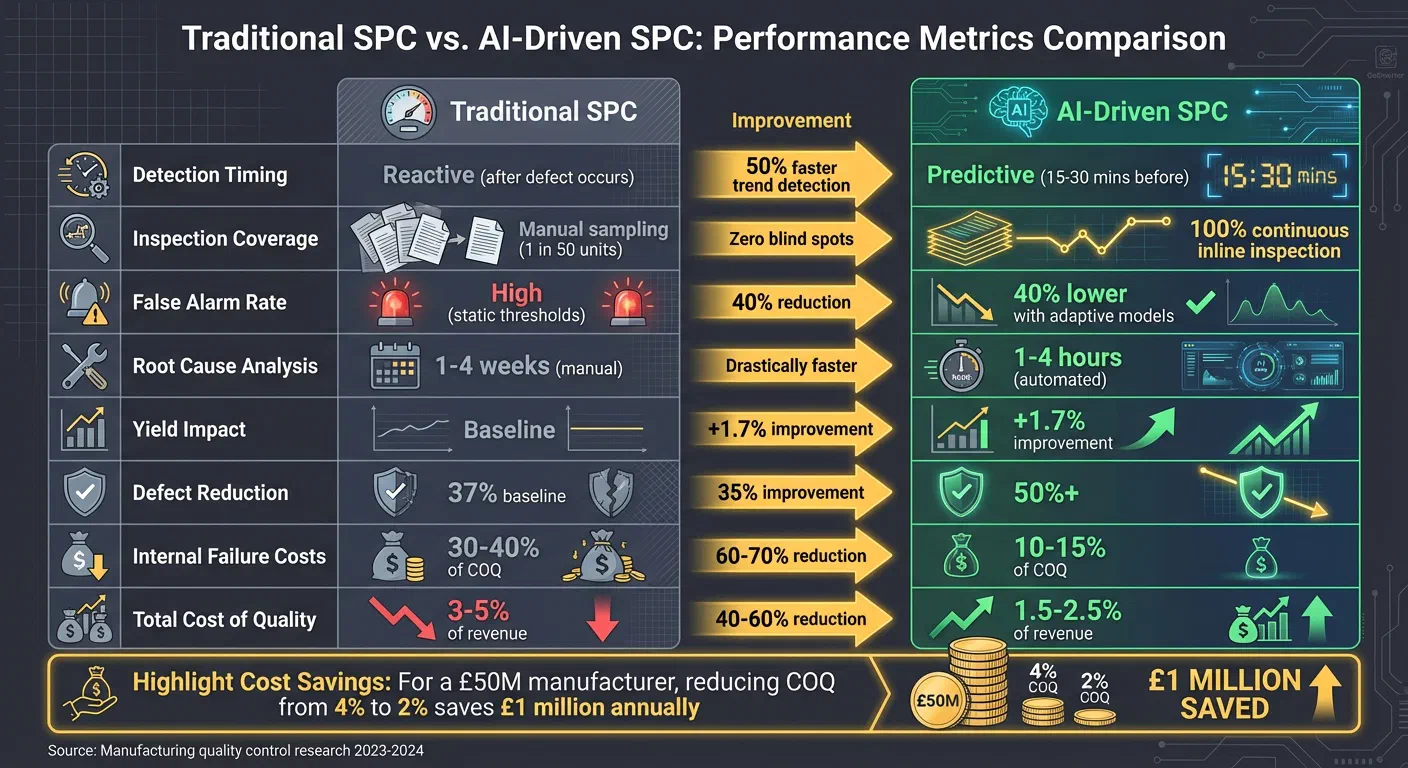

Traditional Statistical Process Control (SPC) has always been a reactive process. Manufacturers rely on manual sampling to spot defects, often discovering issues only after significant damage has been done. AI-driven SPC flips this script entirely. Instead of waiting for defects to appear, it predicts quality issues 15–30 minutes before they happen. By analysing hundreds of process parameters simultaneously and inspecting every unit - not just a sample - AI identifies problems early, preventing them from spiralling into costly mistakes [2][3].

AI systems don’t just predict problems; they’re also smarter about reducing false alarms. Unlike static thresholds used in traditional SPC, AI adapts to changing process conditions, cutting false alarms by 40% [3]. The result? Manufacturers see a 30% faster Mean Time to Detection (MTTD) for process shifts and yield improvements of up to 1.7% in precision manufacturing [9][3]. For a £50 million manufacturer, reducing the Cost of Quality (COQ) from 4% to 2% could save an impressive £1 million annually [2]. And that’s not all - internal failure costs (like scrap and rework) drop by 60–70%, while external failure costs (returns and warranties) fall by 70–80% [2].
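The £1 million figure follows directly from the percentages quoted. A quick sketch of the arithmetic, with a function name invented for the example, lets you plug in your own revenue and Cost of Quality estimates:

```python
def annual_coq_saving(revenue: float, coq_before_pct: float, coq_after_pct: float) -> float:
    """Annual saving when Cost of Quality falls from one share of revenue to another."""
    return revenue * (coq_before_pct - coq_after_pct) / 100


revenue = 50_000_000  # £50 million manufacturer, as in the example above
before = revenue * 4 / 100   # COQ at 4% of revenue
after = revenue * 2 / 100    # COQ at 2% of revenue
saving = annual_coq_saving(revenue, 4, 2)

print(f"COQ before: £{before:,.0f}")  # COQ before: £2,000,000
print(f"COQ after:  £{after:,.0f}")   # COQ after:  £1,000,000
print(f"Saving:     £{saving:,.0f}")  # Saving:     £1,000,000
```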

The speed of root cause analysis is another game-changer. Traditional methods can take 1–4 weeks to narrow down potential causes; AI does it in just 2–3 hours, evaluating hundreds of variables at once [2]. AI-based vision systems also boost defect detection rates by up to 90%, while increasing throughput by over 25% by allowing operators to focus on real issues instead of chasing false alarms [3][4].

Comparison Table: Traditional SPC vs. AI-Driven SPC

Here’s a side-by-side look at how AI-driven SPC outperforms traditional methods:

| Metric | Traditional SPC | AI-Driven SPC | Improvement |
|---|---|---|---|
| Detection Timing | Reactive (after defect occurs) | Predictive (15–30 mins before) [2][3] | 50% faster trend detection [2] |
| Inspection Coverage | Manual sampling (e.g., 1 in 50 units) | 100% continuous inline inspection [3] | Zero blind spots |
| False Alarm Rate | High (static thresholds) | 40% lower with adaptive models [3] | 40% reduction [3] |
| Root Cause Analysis | 1–4 weeks (manual) | 1–4 hours (automated) [2] | Drastically faster |
| Yield Impact | Baseline | +1.7% improvement [3] | Measurable gain |
| Defect Reduction | 37% baseline [4] | 50%+ [4] | 35% improvement |
| Internal Failure Costs | 30–40% of COQ | 10–15% of COQ [2] | 60–70% reduction [2] |
| Total Cost of Quality | 3–5% of revenue | 1.5–2.5% of revenue [2] | 40–60% reduction [2] |

These numbers make one thing clear: AI isn’t just a tool - it’s a game-changer for SPC in metals manufacturing. But this isn’t about replacing human expertise. It’s about giving quality engineers the tools they need to spot and solve problems before they escalate.

Conclusion

Why AI is the Future of SPC

Traditional SPC methods react to problems after they’ve happened, often documenting defects too late to prevent costly consequences. AI flips this approach on its head, turning SPC into a predictive tool that stops quality issues before they snowball. By analysing hundreds of variables - like temperature, vibration levels, or material lot changes - AI can predict potential quality problems and reduce defect rates by 40–60%. This kind of efficiency slashes overall quality costs by 30–50% [2][3]. For a £50 million manufacturer, that could mean cutting annual quality failure costs from £2 million to £1 million.

Tasks that once took weeks, like root cause analysis, now take hours [2]. False alarms drop by 40% because AI models adjust dynamically to actual operating conditions, unlike traditional static thresholds [3]. And with 100% inline inspection replacing manual sampling, you’re no longer guessing whether one inspected part reflects the entire batch [3]. As Will Jackes from iFactory puts it:

“The shift from reactive to predictive quality isn’t optional - it’s essential” [3].

This marks a major leap forward, moving from outdated reactive quality control to a proactive, data-driven approach.

Take Action: Stop Manual Work

If your SPC process still leans on spreadsheets, manual sampling, and intuition, you’re not just wasting time - you’re losing money. The benefits of AI-driven SPC are clear, and the time to modernise is now.

Start by evaluating your current SPC methods. Clean up your historical data and pilot AI on a production line with high scrap rates [4]. Collaboration is key - pair your data scientists with quality engineers. While AI can point to anomalies, it’s the domain experts who can identify the physical causes [4]. Once your data is in order, AI can transform it into actionable insights, helping you streamline processes and boost production efficiency.